(Comment from Amanda, 2021: Revisiting this article has made me aware of how much I learned through doing the research that fed into it and how I was spurred on by the Think Tank team to share what I’d learned.)

“You can listen to the dead with your eyes because you can read what they wrote two thousand years ago” (Dehaene, “How the Brain Learns to Read,” our DEEP lead-in video).

How can we listen with our eyes? Why do we often hear letters and words in our head when we see them on a page? And why is learning to read more difficult for the hard of hearing (Booth, 2019)? In his talk in our DEEP lead-in video, Dehaene explains that sound perception is a crucial factor in constructing the meaning of written languages. He uses scanned images of the brain to show how spoken and written language are closely connected because the same areas of the brain are used for processing both. But what is the underlying system within the brain that controls these processes, and how does this enable us to learn to read?

Working memory and the phonological loop

Experimental cognitive psychologists have been investigating the brain systems that enable us to learn to speak and read our first and subsequent languages since the 1960s, long before modern technology (fMRI and EEG scanning) allowed neuroscientists to “map cognition onto its underlying brain function” (Baddeley, 2019, p. 343). Cognitive psychologists went about this by creating theoretical models of how brain systems work and testing them on people whose brains did not function according to the models, as a result of injury, illness, or an inherited condition. In this way they were able to create and refine their models of brain systems and theories of how the brain can be trained to read.

A cognitive psychologist who contributed greatly to theory about how we learn to read was R. Conrad, who in the 1960s made the link between visually presented letters and the role of sound in memory. He was studying the memorability of British post codes and telephone numbers and found that when people were asked to remember sequences of letters, errors tended to be similar in sound to the correct item. For example, b would be remembered as v even when the letters were presented visually. This indicated reliance on some kind of acoustic memory trace that faded over time. He also found that sequences of similar-sounding letters were harder to recall correctly than sequences of letters that sounded very different (e.g., b, t, c, v, and g were harder to recall correctly than k, w, x, l, and r). Conrad also demonstrated that people born deaf had reduced capacity for remembering and recalling visually presented sequences of numbers and letters. He demonstrated a link between this problem and the difficulty deaf people have in learning to read, and devoted his life to working with the deaf from then on. We will return to the topic of how people born deaf succeed in learning to read a little later.

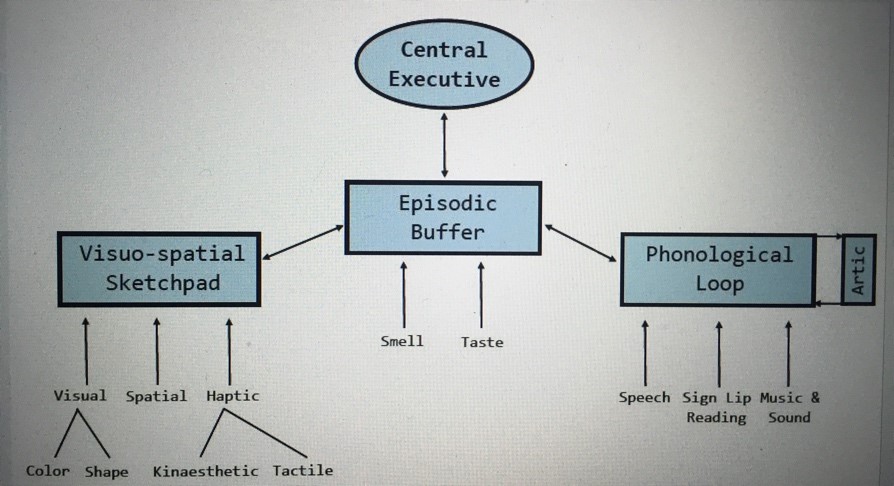

In the early 1970s, cognitive psychologists Alan Baddeley and Graham Hitch started working on the relationship between long-term memory (LTM) and working memory (WM)[1]. They realized that there were at least three components to their WM model: 1) a central executive, which is linked to attention and drives the whole system, 2) the visuo-spatial sketchpad, which works with images, and 3) the phonological loop, which relies on sound.

To understand how this works, Baddeley (2013) suggests in his talk that you try to count the number of windows in the house or apartment where you live. How did you go about this? Probably, you created a visual image of your home and took a mental tour around it counting windows to yourself as you went. The central executive devised your strategy, while your visuo-spatial sketchpad provided the images, and you probably heard yourself mentally counting one, two, three … while using the phonological loop.

The phonological loop is our “inner ear” and our “inner voice,” because it stores phonological code temporarily but needs to rehearse this sub-vocally to hold the phonological code in store. For example, if we are trying to remember a phone number, we can store it temporarily, but need to keep repeating it mentally with our “inner voice” to hear it with our “inner ear” and be able to hold it in storage. This is why they call it a “phonological” (related to sound articulation and reception) “loop” (replayed like a sound recording).

Baddeley and Hitch (1974) demonstrated the same acoustic effect with words that Conrad had found with similar-sounding letters. We can easily store up to five dissimilar one-syllable words (pot, map, sock, etc.) for a short time, but holding and remembering five short words is far harder when they are all similar in sound (cat, can, cap, etc.). Similarity in meaning but not sound has little effect on how well we can remember a sequence of short words. However, in trials with ten words to remember, meaning becomes more important for recall than sound. This suggests that the phonological loop focuses on sound and not on meaning. Of course, other parts of the WM system are using meaning, and the fact we are relying on sound doesn’t mean we don’t understand the meaning. Rather, it seems that we rely heavily on catching and holding sound in memory for as long as we need to work with that information.

[1] The term “short-term memory” had been used previously, but “working memory” indicates more clearly the dynamic nature of this brain system. Baddeley and Hitch acquired this term from Atkinson and Shiffrin (1968), but it was first used in 1960 by Miller, Galanter, and Pribram. Baddeley and Hitch wanted to highlight how working memory serves “as a cognitive workspace, holding and manipulating information as required to perform a wide range of complex activities” (Baddeley, 2019, p. 157).

However, if the sound loop is interrupted in some way, the memory will fade very quickly. This has been demonstrated through experiments using sequences of five longer words (e.g., refrigerator, hippopotamus, imagination). Longer words are harder to remember because they take longer to rehearse in our heads. By the time we have either read or heard the fifth word, we have already forgotten the first one. Baddeley’s (2019) results have shown that “people can remember about as many words as they can say in about two seconds” (p. 159) and that faster speakers and readers can remember more words in those two seconds because they can rehearse them more times.
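
The arithmetic behind this two-second rule can be sketched in a few lines of Python. This is only a rough illustration, not a model taken from Baddeley’s work: the two-second window comes from the quotation above, while the function name `estimated_span` and the articulation rates are hypothetical values chosen to show how the estimate changes with word length and speaking speed.

```python
# Illustrative sketch only. The two-second window is taken from the text;
# the articulation rates below are invented for illustration, not measured data.
REHEARSAL_WINDOW_SECONDS = 2.0


def estimated_span(words_per_second: float,
                   window_seconds: float = REHEARSAL_WINDOW_SECONDS) -> int:
    """Rough count of words that can be said (and so rehearsed) in the window."""
    return int(words_per_second * window_seconds)


# Hypothetical articulation rates, in words spoken per second.
hypothetical_rates = {
    "short words, faster speaker": 3.0,
    "short words, slower speaker": 2.0,
    "long words such as hippopotamus": 1.0,
}

for label, rate in hypothetical_rates.items():
    print(f"{label}: about {estimated_span(rate)} words")
```

With these assumed rates, the faster speaker’s estimate is larger than the slower speaker’s, and long words give the smallest estimate, which is the pattern the word length experiments describe.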

Baddeley and Hitch (1974) demonstrated how the memory trace (the items to be remembered) fades away unless it can be refreshed through sub-vocal rehearsal. They did this by preventing people from rehearsing sub-vocally by making them say aloud something irrelevant while they tried to remember and recall a list of written words. (They had to repeat aloud “the,” “the,” “the,” etc. while they tried to memorize the words.) They found that being unable to rehearse with the “inner voice” removes the effect of word length. It also disrupts the phonological similarity effect when material is presented visually because being unable to rehearse sub-vocally interferes with the process of turning the visual stimulus, such as written letters, into phonological code. On the question of why we forget, however, it is very difficult to demonstrate conclusively whether this is because memory traces decay or because they are interfered with, and so this point remains controversial.

The role of the phonological loop in language learning

Baddeley and two colleagues (1998) wanted to find out if the phonological loop was involved in language learning. They worked with an Italian patient who had a very clear phonological loop deficit, and so they were able to investigate what she couldn’t do. She had normal intelligence, long-term memory and Italian (her native language) skills, but she could not repeat back telephone numbers. In other words, she could not listen to and then recall a sequence of nine numbers because she was unable to mentally rehearse and store them. The researchers wanted to find out if the phonological loop was a system for acquiring new language and so attempted to teach her Russian vocabulary. They presented her with a sequence of eight Russian words, both visually and auditorily, and she had to learn the Italian translations of these words. As a control, she was given the task of learning to associate eight pairs of unrelated words in her first language, Italian. This control task was based on her semantic memory (her stored knowledge of the world), which was unimpaired. They also compared her performance with that of eight other people who did not have a phonological loop deficit and who were the same age as her, and of matching intelligence. Although she had no problem learning the pairs of unrelated Italian words, at the end of ten trials, she had not mastered a single Russian word. The control group, however, had learned the Russian vocabulary with ease. This gave a strong hint that the phonological loop is involved in acquiring new language.

It’s always important to replicate experiments, but they didn’t have access at that time to another person with the same deficit. So, they used their undergraduate students and blocked their phonological loop. They did this by making the students learn vocabulary in a foreign language while suppressing sub-vocalisation (i.e., by making them repeat aloud a word like “the,” which interfered with their use of their “inner voice” to repeat the foreign words and keep them in mental storage). They found that, as with the Italian woman, this didn’t interfere with paired association in their own language, but it did interfere with the acquisition of new material. This demonstrated a clear association between the phonological loop and the ability to learn a foreign language.

Baddeley et al. (1998) then looked at first language acquisition by studying children who had normal development except that their language, especially their vocabulary size, was about two years behind what it should be. They tested these children and found that they had a particular problem in learning new and unfamiliar word forms, such as nonsense syllables. This suggested they had a phonological loop deficit. Then they asked them to listen to and repeat back non-words of different lengths, e.g., ballop, or much longer ones like woogalamic. They found that children of the same age with age-appropriate language and other children at their language age (i.e., two years younger than them) were quite good at this (although longer words were harder). However, the children with this specific language impairment had great difficulty, particularly as the words got longer. They concluded that: “If a child cannot temporarily maintain the form of a new and unfamiliar spoken word, it is perhaps unsurprising if their vocabulary development is slower” (Baddeley, 2019, p. 243).

Furthermore, they used this Non-word Repetition Test on a sample of 118 children and found there was a robust association between their phonological loop measure and the number of words the children knew. Of course, showing that non-word repetition ability is correlated with vocabulary size does not mean that the link is causal. It could be argued that having a good vocabulary helps people cope with and repeat back unfamiliar new words. They investigated this by following up over several years on the children they had tested. At first, non-word repetition ability appeared to drive vocabulary growth, suggesting that the phonological loop is crucial. As vocabulary develops, however, the association between a child’s phonological loop level and their vocabulary size becomes more equal, suggesting that “although the phonological loop plays a dominant role during the early years, existing word knowledge does in due course begin to help the child learn new words” (Baddeley, 2019, p. 244).

Although the phonological loop seems to provide an important tool for the acquisition of first and foreign languages, it is not the only tool. People with a reduced phonological loop, like the Italian woman, can develop extensive vocabularies, probably because later stages of language acquisition depend on other factors, such as executive resources and exposure to a rich language environment.

Baddeley’s team (1998) also tested dyslexic people and found that they tend to have both poor digit span (the ability to hear and repeat back a series of numbers) and poor performance on the Non-word Repetition Test. It is therefore highly likely that an impaired phonological loop is a contributing factor to the difficulties many developmental dyslexics experience when learning to read. The hearing and repeating back of non-words is therefore now a standard test that is part of a diagnosis for dyslexia or specific learning impairment. It is also used to predict how well the vocabulary of children without any learning impairments will develop and is more accurate than measuring their general intelligence.

The role of the phonological loop in reading

To find out more about the role of the phonological loop in reading, Baddeley’s team asked volunteers to read sentences (like example 1 below) and decide whether they made sense or not. Half the sentences either contained an irrelevant word (as in example 2) or had the order of two words switched (as in example 3) (Baddeley, 2019, p. 234):

1. She doesn’t mind going to the dentist to have fillings, but does mind the pain when he gives her the injection at the beginning.

2. She doesn’t mind going to the dentist to have fillings, but does mind the rent when he gives her the injection at the beginning.

3. She doesn’t mind going to the dentist to have fillings, but does mind the when pain he gives her the injection at the beginning.

The volunteers were first allowed to read normally and then had to read while suppressing sub-vocal rehearsal by repeating aloud a word (“the”) as they read. The results showed that suppressing the phonological loop did not slow the speed at which they read, but it did increase the number of errors they made in identifying nonsense sentences. The same effect was also found when longer prose passages were used. However, other potentially distracting tasks, such as tapping a pencil while reading or ignoring spoken words, had no effect on reading accuracy. It seems that sub-vocal articulation provides a backup by checking for accuracy. This explained for me a mystery that I have long puzzled over. Now I understand why I hear my voice when reading something hard or something I have to pay full attention to, like proofreading. It seems my phonological loop kicks in to reinforce accuracy.

To investigate further the form that the inner voice backup takes, they tested people to find out if suppression of sub-vocal rehearsal affected their ability to judge whether a cluster of written letters represented a word (e.g., carrot) or a non-word (currot). They also asked people to decide whether two written words sounded the same (e.g., scene and seen) or different (e.g., scone and scene). They found that sub-vocal suppression did not prevent people from performing these tasks quickly and accurately, and they could even judge whether a word and a non-word sounded the same (e.g., chaos and cayoss). In other words, they could still make an auditory representation with their inner ear. However, they could not decide whether words rhymed or not (e.g., bean and seen) when sub-vocal articulation was suppressed. This suggests that the need to remove the initial consonant sound before recognizing the rhyme relies on articulatory coding (the inner voice), and this is disabled when sub-vocal articulation is suppressed. This points to the existence of two types of coding: the “inner ear,” based on the auditory representation of a word or non-word, and the “inner voice,” based on articulatory coding.

How people with hearing disabilities learn to read

If sound is so important in learning to read, how do people who are born deaf or hard of hearing learn to read? Tens of millions of children across the globe are hard of hearing (Booth, 2019) and most children with severe hearing loss find it very hard to learn to read. Many are able to read only at elementary school level when they graduate from high school. However, there are also many children who are deaf or hard of hearing who read very well. What are the reasons behind these great differences in reading ability? Research indicates that children who communicate predominantly in signed language use different brain mechanisms when reading compared with those who communicate predominantly in oral language. (For two very easy-to-read explanations, see Azbel, 2004, and VOA, 2011.)

We have seen already in Dehaene’s talk that reading success depends initially on phonological awareness of spoken language and understanding of the visual shape-sound correspondence. The findings of cognitive psychologists have shown that building reading skill depends on the size of one’s vocabulary as well as other executive functions. Children with mild to moderate hearing loss often use oral communication as their main mode of communication and so can obtain some (degraded) phonological awareness of language to facilitate reading acquisition. Lip reading can also contribute to the phonological awareness skills of children who are hard of hearing. However, children who are profoundly deaf have little or no access to undistorted sound, and so they cannot use phonological awareness of spoken language when learning to read. They therefore have to learn how to read without knowing the pronunciation of words. They need to learn instead that a certain visual sign in the sign language system they use refers to a written word. Good deaf readers are able to activate signed language automatically when reading, and recent neuroimaging research in deaf adults (Booth, 2019) has shown that those who predominantly use signed language use different brain regions when reading compared to those who predominantly use oral language.

As a result of these findings, teachers of children who are deaf or hard of hearing tailor reading instruction according to the child’s communication mode. Children who use mainly oral communication are taught how to read in a similar way to hearing children, while for children who use mostly signed language, reading instruction focuses on the signed translations of the written words. It is therefore important that children who do not have access to sound in their first months of life be provided with structured language input in the form of signed language. This puts a great deal of responsibility on caregivers, because 95% of deaf or hard of hearing children are born to hearing parents (Booth, 2019).

Vocabulary, the phonological loop, the visuo-spatial sketchpad, and EFL learners

In his talk, Dehaene describes reading as an interface, a way of connecting vision and spoken language, and shows how learning to read changes the anatomy of the brain. Learning to read additional languages leads to further changes in our brain. People learning to read an alphabetic language first learn to change their mental representation of phonemes, the elementary components of speech, by gaining the capacity to attend to the individual phonemes of the language and to attribute letters to them. Once they are able to decipher the sound and letter correspondences and recognize words auditorily, they begin to build direct connections between vision and meaning, and bypass the auditory circuit. When the speed with which English L1 and FL readers are able to make these connections increases sufficiently, they achieve what Richard Day calls reading fluency.

The size of a learner’s vocabulary is related to their speed and efficiency in learning to read. Clearly, the more words beginner readers know, the greater the resources they have at their inner ear’s disposal when making sense of the shapes and sounds they are decoding. Learning to read an additional language, therefore, is harder than learning to read a first language because of the reduced vocabulary store available in the foreign language. Even if your learners have managed to decipher the words accurately and can hear them with their inner ear, they still cannot make sense of what they are reading if they have no idea of what the words mean.

Richard Day explains the role of Extensive Reading (ER) in reinforcing and building vocabulary. In my opinion, the aim of ER is to build reading fluency. What is this exactly and how do we recognize it in our EFL learners? In my own research (Gillis-Furutaka, 2015), Japanese students from beginner to upper-intermediate level reported that, when reading ER materials free of taxing vocabulary, they start to be able to forget about the words on the page and just see the scenes unfolding in their mind’s eye (i.e., their visuo-spatial sketchpad), like a movie. This happens about the time they reach upper-intermediate level (CEFR B1), provided they are reading easy books below their actual reading level (Gillis-Furutaka, 2015). It seems that this is close to the experience of native English-speaking fluent readers as well, and marks the moment when reading a great story becomes purely enjoyable.

We have seen how the brain’s working memory system makes it possible for us to learn to recognize the sounds of our first and subsequent languages and to derive meaning from these sounds. When learning to read, sound is of primary importance because the brain learns how to match visual symbols to the sounds of the language(s) we are reading and to derive meaning through this process. A normally functioning phonological loop makes this process fast and efficient, but loss of hearing or a defective phonological loop does not inhibit the brain’s ability to learn to read. The brain is a highly plastic organ, and so other brain systems, such as visuo-spatial systems or semantic memory, can take over the work of the phonological loop and enable such people to read. Reading is a vital learning tool for first and additional language learners because once we have learned to read, we can begin reading to learn and can derive knowledge and pleasure from this unique human ability.

References

Atkinson, R. C., & Shiffrin, R. M. (1968). Human memory: A proposed system and its control processes. Psychology of Learning and Motivation, 2, 89–195.

Azbel, L. (2004). How do the deaf read? The paradox of performing a phonemic task without sound. Intel Science Talent Search. http://psych.nyu.edu/pelli/docs/azbel2004intel.pdf

Baddeley, A. (2013, October 31). Working memory: Theories, models, and controversies. Retrieved from https://www.youtube.com/watch?v=yL2ul2bR0Ok

Baddeley, A. (2019). Working memories: Postmen, divers, and the cognitive revolution. London, UK: Routledge.

Baddeley, A. D., & Hitch, G. J. (1974). Working memory. In G. H. Bower (Ed.), The psychology of learning and motivation: Vol. 8 (pp. 47–89). New York, NY: Academic Press.

Baddeley, A., Gathercole, S., & Papagno, C. (1998). The phonological loop as a language learning device. Psychological Review, 105, 158–173.

Booth, J. R. (2019). How do deaf or hard of hearing children learn to read? Retrieved from https://bold.expert/how-do-deaf-or-hard-of-hearing-children-learn-to-read/

Gillis-Furutaka, A. (2015). The multiple uses of the L1 when students read EFL graded readers. 京都産業大学教職研研究紀要 [Kyoto Sangyo University Education Research Bulletin], 10, 23–47.

Miller, G. A., Galanter, E., & Pribram, K. H. (1960). Plans and the structure of behavior. New York, NY: Henry Holt.

Voice of America (VOA). (2011, April 21). A new reason for why the deaf may have trouble reading. Retrieved from https://learningenglish.voanews.com/a/a-new-reason-for-why-the-deaf-may-have-trouble-reading-119728279/115194.html

Amanda Gillis-Furutaka PhD, program chair of the JALT Mind, Brain, and Education SIG, is a professor of English at Kyoto Sangyo University in Japan. She researches and writes about insights from psychology and neuroscience that can inform our teaching practices and improve the quality of our lives, both inside and outside the classroom.