Among the Best of 2020

Readers chose this article as one of the five best from all of 2020!

The brain does so many amazing things. So, deciding which is the most amazing is not easy. Is it memory, in which even its faults are part of the design (see my piece in Think Tank on Forgetting), or emotion, the mechanism that steers us through life (see Think Tank on Emotion), or maybe even language, the tool that allowed our species to exploit the social environment (see Pagel’s TED Talk)? Any of these choices would be good, but I am going to select something else: the miraculous way the brain changes raw sensory signals into Picassos, parties, and poems.

Think about it. We are bombarded by literally millions of bits of data every second. Zimmerman’s 1986 estimate is that our sensory systems send our brains 11 million bits per second, but I wonder if that number is too low. Just for visual input, we have 126 million cones and rods in each retina, some so sensitive that they can be stimulated by a single photon. In addition to those 252 million, millions and millions of other receptors in our ears, skin, nose, gut, and tongue are sending signals up as well. I wonder if the real number of bits per second is in the hundreds of millions.

Looking at this scene, I see a gray car, a street, a brown building, etc. The truth is, I am not seeing any of those “things” at the retinal level. What my brain gets is a constant stream of neural impulses, like a huge array of blinking LED lights, just simple data with no information on source, color[1], or direction, and certainly no sense of cars and buildings. Our brains have to figure all that out by matching incoming signals to internal models of the world.

[1] Technically, color is “an illusion created by our brain” (Wlassoff, 2018). In other words, we do not detect color in the world; we paint the world with color to make it easier to decode. If this seems hard to believe, keep in mind no other creatures see color quite the way we do, if at all. Then thank me for the cognitive dissonance and look at the images Andy Clark uses in this presentation at 5:30.

Our traditional view of perception works like this: 1) We collect information through our senses; 2) It gets passed to the brain in raw form; 3) The brain analyzes it by identifying patterns similar to patterns we have experienced in the past. That is how we figure out “things.” Then, 4) the brain determines whether it is of interest to us or not and how we should react. Aha! That’s a car, maybe European. Let’s take a closer look.

In this view, the mental models that we match up to incoming sensory data are the key. How do we make such models? Well, let’s think about the one you have for classrooms.

You’ve probably seen hundreds of classrooms in movies, books, and real life. Every one was different, but they had some common features too, such as a blackboard, rickety desks, and a wide squarish shape. Those common features caused the same neural patterns to fire again and again, wiring those neurons together so that they became the internal representation, or model. A model, then, is a consolidated firing pattern, just like a memory is. Our model for a classroom will have a high probability of blackboards, desks, and chairs being in it. Memory specialist Daniel Schacter and predictive processing guru Andy Clark both point out that, in order to be used in an instant and flexible fashion, models like this cannot be perfectly detailed; they must be cartoon-like, a mishmash of every previous encounter.[2]

[2] You might detest your poor memory and wish you could remember every experience perfectly, but you’d be in trouble if you could. Poor memory is a result of our brain purposely dropping details to make simple archetypal models that are easy to apply. People with perfect memory are afflicted by it. Rather than conjuring up one archetypal model for each trigger, their brains flash every individual memory, and it is exhausting.
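For readers who like to see an idea in code, here is a minimal sketch of a model as a "mishmash of every previous encounter." It is only an illustration, not a claim about how neurons do it: the function name `build_model` and the sample classrooms are invented for this example, and the "model" is nothing more than how often each feature showed up.

```python
from collections import Counter

def build_model(encounters):
    """Collapse many specific encounters into one archetypal model.

    Each encounter is a set of observed features. The model keeps only
    how often each feature appeared, not the encounters themselves.
    """
    counts = Counter()
    for features in encounters:
        counts.update(features)
    total = len(encounters)
    return {feature: count / total for feature, count in counts.items()}

# Hypothetical classroom encounters, invented for illustration.
classrooms = [
    {"blackboard", "desks", "chairs"},
    {"blackboard", "desks", "chairs", "projector"},
    {"desks", "chairs", "whiteboard"},
    {"blackboard", "desks", "chairs", "posters"},
]

classroom_model = build_model(classrooms)

# Print features from most to least expected.
for feature, probability in sorted(
    classroom_model.items(), key=lambda item: (-item[1], item[0])
):
    print(f"{feature}: {probability:.2f}")
```

Notice that the individual classrooms are thrown away. Only the cartoon-like summary survives, which is what makes it quick to apply.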

In short, in the traditional view, we collect sensory data in the lower areas and send it up to higher cortical areas where it is matched up to models. It is basically a bottom-up process.

That seems reasonable, doesn’t it? But if you think about it, there are some problems with this view, the main one being that this means of processing would overwhelm our little 2% of body mass. With millions of incoming signals, and hundreds of millions of mental models (aka memories) to match them up to, well, you know: it would be like going to a library to find a line you liked when you can’t remember who wrote it or what book it was in. We just don’t have that much time in the real world. While I’m flipping through my huge catalogue of mental models to see if that thing in front of me is a lion, it might just eat me.

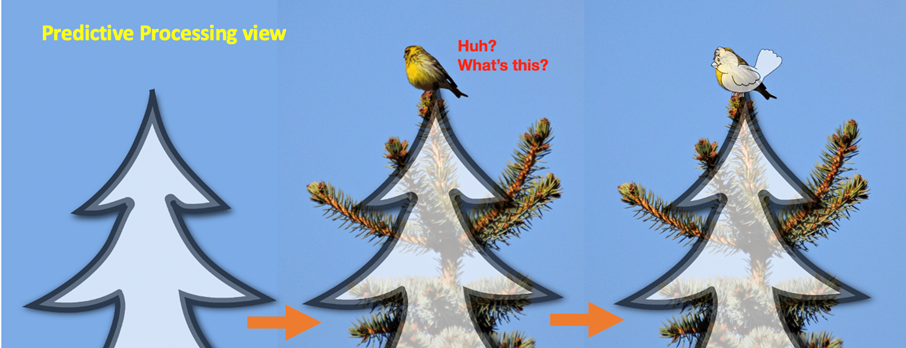

Our little brains need speed and efficiency. That is exactly why neuroscientists are tending towards another theory of how perception works that is far more efficient and elegant: predictive processing. In this view, rather than sending up a huge amount of sensory data to be processed in higher cortical layers, these higher levels of the brain predict what we are going to encounter and send the prediction down even before our senses go into action. As our sensory layers send signals up, they meet the signals of the prediction coming down and those that match the prediction are cancelled out. The sensory signals that don’t match—prediction errors—keep moving up so that those higher cortical areas can figure out what is wrong.
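The cancelling-out step can be sketched in a few lines of code. This is a toy illustration under my own assumptions, not a neural model: signals are numbers between 0 and 1, and the function name `prediction_errors`, the `tolerance` value, and the sample features are all hypothetical.

```python
def prediction_errors(prediction, sensed, tolerance=0.1):
    """Return only the signals that do not match the prediction.

    prediction and sensed map a feature name to a signal strength (0 to 1).
    Signals within `tolerance` of the prediction are "cancelled out";
    everything else is reported as a prediction error.
    """
    errors = {}
    for feature in prediction.keys() | sensed.keys():
        expected = prediction.get(feature, 0.0)
        actual = sensed.get(feature, 0.0)
        difference = actual - expected
        if abs(difference) > tolerance:
            errors[feature] = difference
    return errors

# Hypothetical values: what the higher levels predict for a classroom...
prediction = {"blackboard": 1.0, "desks": 1.0, "students_seated": 1.0}
# ...and what the senses report, including one thing nobody predicted.
sensed = {"blackboard": 0.95, "desks": 1.0, "students_seated": 1.0, "open_window": 1.0}

errors = prediction_errors(prediction, sensed)
print(errors)
```

Four signals come in, but only the unpredicted one is passed along. Everything that matched the prediction never needs to travel further up.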

It is like we are putting a predictive template on the world and, once we start to collect sensory data, the data is only used to check whether the template is right. Much faster. And if you are wondering how this esoteric theory might help you get through your Monday morning grammar class, bear with us. Predictive processing connects to everything, with a special fondness for grammar.[3]

It took me a long time to really understand how predictive processing works, but once I got it, it changed almost everything I knew about the brain.

[3] I write about neuromodulation in terms of “books,” “models,” “predictions,” “errors,” etc., but these are just metaphors that help us get a grasp on the way our brain processes the world.

The first important point about predictive processing is that it runs on expectations. As in the traditional view, we still have this huge library of mental models built from previous experiences, but rather than use the library to decode sensory signals sent from the bottom up, in predictive processing, we figure out which of these models we are most likely to encounter next and that is the one that gets passed down.

So, even before I walk into a classroom, my brain has already gone into my library and opened the volume on classrooms. It tells me what I am most likely to encounter. This is what makes the processing “predictive” and the models “generative” since the models generate predictions and pass them down to lower levels. Okay, just so you’ll be ready, here is what you are going to see. There will be a room full of Japanese students sitting at desks, eager to hear you speak (even models can con you).

Since we send these expectations “down” in a pre-emptive fashion, I already know what’s going to happen and decoding the world is much simpler.

Our brains love to save processing power and that is exactly what predictive processing has to offer. The only things that go all the way up from the sensory levels are the things that do not match the model, what are called prediction errors. Remember that I said the model is simple and cartoon-like? In that way, we have to pay attention only to errors that are significantly different from the model, not the little things, such as the fact the students are wearing different clothes than last week. The little things get filtered out as the feedback goes to higher and higher levels, until only the most significant discrepancies remain. Since it is just the errors in the prediction that get sent back up, the upper levels do not have to bother with millions of bits per second, just the few that do not align with that volume on classrooms. The role of our sensory system, then, is just to scan for evidence that suggests the prediction is wrong.
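The filtering described above can be pictured as a series of gates, each a little stricter than the last. The sketch below is my own simplification: the function name `pass_up`, the error sizes, and the thresholds are invented for illustration, and real cortical levels certainly do not use fixed cut-offs like these.

```python
def pass_up(errors, thresholds):
    """Send prediction errors up through successive levels.

    errors maps a description to an error size (0 to 1).
    thresholds lists, from lowest level to highest, the minimum size an
    error needs in order to be passed on.
    Returns a list showing what survives at each level.
    """
    surviving = dict(errors)
    history = []
    for threshold in thresholds:
        surviving = {
            name: size for name, size in surviving.items() if size >= threshold
        }
        history.append(surviving)
    return history

# Hypothetical prediction errors on entering the classroom.
errors = {
    "different clothes": 0.1,
    "new poster": 0.3,
    "blackboard missing": 0.9,
}
# Hypothetical thresholds; each level is harder to get past.
thresholds = [0.2, 0.5, 0.8]

history = pass_up(errors, thresholds)
for level, survivors in enumerate(history, start=1):
    print(f"Level {level}: {sorted(survivors)}")
```

The little things drop out early, so the top level only has to deal with the one discrepancy that really challenges the model.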

Prediction errors that are serious enough not to get filtered out, such as half the students standing in the back of the room, will make it to the top with a huge question mark. Is this really my classroom? Yes, all the other characteristics match up. Then, why are they standing? Is there a bee in the room again like there was last year? Is someone showing photos of their new boyfriend? It is unlikely I would think a snake was why the students were standing, since my classroom model does not have snakes in it.

But what if I were walking in a forest instead? My book on forests would certainly have snakes in it, circled in red. That’s why seeing a stick, like the one below, would make me jump.

I’ve already laid down my template of a forest floor and the model says that the most likely thing I’m walking over is grass. Previous experience has set that as the highest probability. There is a lower probability of finding a stick in the grass, and that for finding a snake is even lower. However, when some initial sensory signals come in that there is something long, round, and brown in the grass, an error in my prediction, my brain bypasses the more probable sticks and assumes it is a snake. Far less probable but far more dangerous. Emotion and the flight/fight/freeze response override probability in this case, which, overall, has always been a sound strategy. So, the snake part of my model becomes fully activated, including the motor actions for jumping back. I do. The top also sends out orders to get ready to fight, flee, or freeze, and commands my visual system to make sure it really is a snake. On checking, new evidence gets sent back up: Hold on, it has bark! The top says Whew! and tells the autonomic nervous system to Stand down! and my parasympathetic nervous system calms me.
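One way to picture how danger can beat probability is to weigh each guess by what it would cost to ignore it. This is just one possible formalization, not something the theory prescribes: the numbers, the `cost_if_ignored` field, and the function names `most_probable` and `most_urgent` are all assumptions made up for this sketch.

```python
def most_probable(hypotheses):
    """Pick the guess with the highest probability alone."""
    return max(hypotheses, key=lambda name: hypotheses[name]["probability"])

def most_urgent(hypotheses):
    """Pick the guess with the highest probability times cost of ignoring it."""
    return max(
        hypotheses,
        key=lambda name: hypotheses[name]["probability"]
        * hypotheses[name]["cost_if_ignored"],
    )

# Hypothetical numbers for "something long, round, and brown in the grass."
hypotheses = {
    "stick": {"probability": 0.9, "cost_if_ignored": 1},
    "snake": {"probability": 0.1, "cost_if_ignored": 100},
}

print("Most probable:", most_probable(hypotheses))
print("Acted on first:", most_urgent(hypotheses))
```

With these made-up values, the stick wins on probability, but the snake wins once the cost of being wrong is counted, so jumping first and checking later comes out as the sensible move.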

The incident above illustrates three additional features of predictive processing. First, predictive processing is not all top-down; prediction errors are passed back up as well. The less significant ones are filtered out on the way up, but those that carry a high prediction error weight (errors that show the model does not work) or a high emotional valence make it to the higher areas and cause the model to be tweaked, augmented, or discarded.

Second, and this is important, all of our models have scripts for action as well, especially motor actions. When you saw that stick, you jumped back even before you consciously decided to. That action was part of the model. You also directed all your senses towards the threat in order to deal with it. In other words, all models include emotion, action plans, and affordances. After all, we don’t just have brains so that we can sit around and contemplate. Our brains exist to move us through the world and help us prosper.

Third, emotion can override probability in the evaluation of the model. In fact, that might help answer an important question that psychologists are asking: why people cling to ideas that are not in their best self-interest. Despite good probabilistic evidence that their model is wrong, people still refuse to wear a mask, to leave an abusive partner, or to abandon a belief that is harming them. The pain and disruption that would be caused by admitting to being wrong is so great that the stick stays a snake.

So far, I have explained predictive processing in terms of visual images, but the brain uses predictive processing to understand other things, virtually everything, about the world, including cause and effect, culture, and human relations. The brain is truly a prediction machine. As Stephen taught us, it is how the brain manages the new and different. Harumi and Cooper will explain how language processing is predictive. After all, grammar is a generative model that allows us to predict what word combinations are possible. Jason will take the idea further and suggest we teach to the model rather than to the language paradigm. Finally, Caroline will conclude by offering a peek at some of the more philosophical aspects of predictive processing.

Do you see where I am going with this? In our opinion, predictive processing rules! I was setting you up by mentioning the other “amazing skills” at the start: memory, emotion, and language. I hope now you see how they are all part of, or built on, predictive processing! Many of its advocates, including the contributors to this issue, even dare to say predictive processing is the Grand Unifying Theory of the brain. It explains how everything else works.

Curtis Kelly (EdD), the first coordinator of the JALT Mind, Brain, and Education SIG, is a Professor of English at Kansai University in Japan. He is the producer of the Think Tanks, has written over 30 books and 100 articles, and has given over 400 presentations. His life mission, and what drew him to brain studies, is “to relieve the suffering of the classroom.”